Photo by Slejven Djurakovic on Unsplash

Apple, one of the most profitable companies in the world, is renowned for its beautifully designed products. In an age when computers were used almost exclusively in business settings, Jobs envisioned a seamlessly integrated computer that could be sold to the average consumer. Apple set out to make using a computer as simple as possible, investing heavily in graphical user interfaces, which enabled users to navigate by clicking on icons as opposed to typing commands at a terminal.

Don't get me wrong, I love Apple's products, but they carry a substantial premium over the cost of the underlying hardware. More often than not, you can get a lot more value for your money by building a computer yourself. In this series, I'll attempt to explain the role of various computer components and what each of the advertised specifications means. In this post, we'll take a look at the central processing unit, or CPU.

The central processing unit is the brain of the computer. Whether you're streaming your favourite show, playing an MMORPG or reading email, everything that runs on your computer is ultimately reduced to a sequence of bits and processed by the CPU(s).

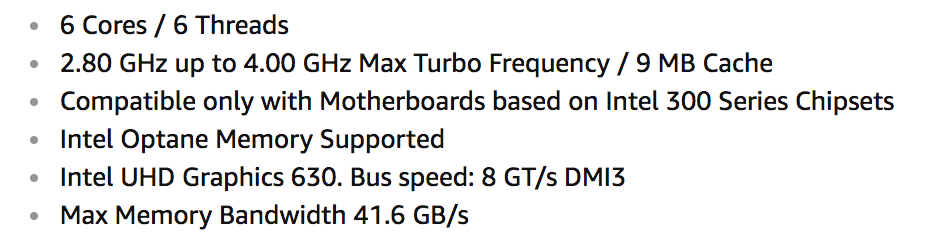

To help us understand the various advertised specifications of CPUs, we'll use the Intel Core i5-8400 desktop processor as a reference. The specs for this processor are as follows:

Clock Frequency

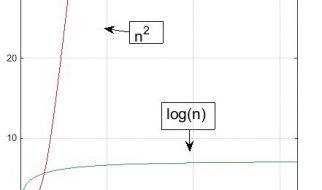

Thanks to Moore's law, the average modern processor has a clock frequency of about 3 to 4 GHz. The G in GHz stands for giga. In the metric system of measurement, giga is 1,000 times larger than mega (M), and mega in turn is 1,000 times larger than kilo (k).

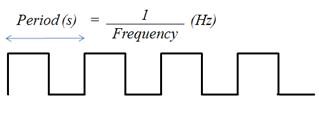

Frequency is best understood in terms of the period. If we take the time at which a rising edge (a transition from low to high) occurs as the start of our frame of reference, the period is the amount of time (in seconds) that elapses before the next rising edge. Frequency is the reciprocal of the period: the number of periods that fit into one second.

In absolute terms, a clock frequency of 4 GHz signifies that 4,000,000,000 cycles occur every second, where one cycle is the interval in which the signal goes from low to high, back down to low, and reaches the point where it is about to rise again.
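As a quick sanity check on the arithmetic, here is a minimal sketch that converts a clock frequency into its period. The names `period_seconds` and `GIGA` are made up for illustration.

```python
def period_seconds(frequency_hz: float) -> float:
    """Return the clock period (seconds per cycle) for a given frequency."""
    return 1.0 / frequency_hz


GIGA = 1_000_000_000  # giga = 1,000 x mega = 1,000,000 x kilo

frequency_hz = 4 * GIGA
period_ns = period_seconds(frequency_hz) * 1e9  # seconds -> nanoseconds

print(f"{frequency_hz:,} cycles per second, {period_ns:.2f} ns per cycle")
# 4,000,000,000 cycles per second, 0.25 ns per cycle
```

In other words, at 4 GHz each cycle lasts a quarter of a nanosecond.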

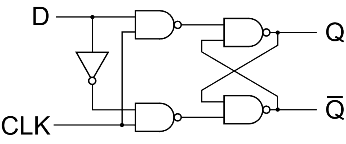

A core component of the CPU is the flip-flop. The circuit diagram for a D flip-flop looks something like this.

When the logic gates are put in the preceding configuration a special property arises.

In between clock cycles, flip-flops maintain state.

What do we mean by state? In essence, a flip-flop will store either a 1 or a 0 which can then be used by the combinational logic connected to its output to perform some kind of operation.
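To make the idea of state concrete, here is a small behavioural sketch of a D flip-flop. It models only the externally visible behaviour (capture the input on a rising edge, hold it otherwise), not the logic gates themselves. The class name `DFlipFlop` and its `tick` method are invented for this example.

```python
class DFlipFlop:
    """Rising-edge-triggered D flip-flop that stores a single bit."""

    def __init__(self) -> None:
        self.q = 0          # stored state (the output)
        self._prev_clk = 0  # previous clock level, used to detect edges

    def tick(self, clk: int, d: int) -> int:
        # Capture the input only on a low-to-high clock transition.
        if self._prev_clk == 0 and clk == 1:
            self.q = d
        self._prev_clk = clk
        return self.q


ff = DFlipFlop()
outputs = [
    ff.tick(clk=1, d=1),  # rising edge: stores 1
    ff.tick(clk=1, d=0),  # clock still high: input ignored, keeps 1
    ff.tick(clk=0, d=0),  # falling edge: input ignored, keeps 1
    ff.tick(clk=1, d=0),  # next rising edge: stores 0
]
print(outputs)  # [1, 1, 1, 0]
```

Notice that the output only changes on a rising edge. In between, the flip-flop keeps returning whatever it stored last.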

Let's say we had two processors, one operating at 1 Hz and the other at 2 Hz. The second processor has twice as many clock cycles per second as the first one. In other words, the sequential logic (flip-flops) can take on twice as many values in the same period of time. The second processor could theoretically calculate two sums in the time it takes the first processor to compute one.

The frequency of the clock is limited by the critical path. The critical path is the slowest chain of combinational logic between two registers, that is, the one with the longest delay. If a rising edge were to occur before the combinational logic had enough time to compute its result, the input signals would change before the result had been captured, leading to an incorrect result.

To elaborate, suppose the critical path consisted of a 4-bit adder whose input signals were each connected to the output of a register (a collection of flip-flops). If at the start of the period the state of the registers were 1000 (decimal 8) and 0010 (decimal 2), then we'd expect the sum to be 1010 (decimal 10). However, if the clock frequency were set too high, the internal state of the flip-flops would change before the sum had made its way to the input of another register.
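The adder scenario can be sketched as a toy timing model. The 0.5 ns adder delay is a made-up figure, not a measurement of any real chip. The model also simplifies what happens when the clock is too fast: real hardware would latch an unpredictable value, whereas this sketch simply returns a stale one. The function names are hypothetical.

```python
def max_clock_hz(critical_path_delay_s: float) -> float:
    """Highest frequency at which the slowest logic path settles within one period."""
    return 1.0 / critical_path_delay_s


def captured_sum(a: int, b: int, period_s: float, adder_delay_s: float, stale: int = 0) -> int:
    """Value latched by the destination register at the next rising edge."""
    if period_s >= adder_delay_s:
        return (a + b) & 0b1111  # adder settled in time (4-bit result)
    return stale  # simplification: the edge arrived too early, old value is latched


ADDER_DELAY_S = 0.5e-9  # assumed critical path delay of 0.5 ns

print(f"max clock: {max_clock_hz(ADDER_DELAY_S) / 1e9:.1f} GHz")  # max clock: 2.0 GHz

# 1 GHz clock (1 ns period): the sum has time to settle.
print(format(captured_sum(0b1000, 0b0010, 1.0e-9, ADDER_DELAY_S), "04b"))  # 1010

# 4 GHz clock (0.25 ns period): the edge arrives before the adder is done.
print(format(captured_sum(0b1000, 0b0010, 0.25e-9, ADDER_DELAY_S), "04b"))  # 0000
```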

Cores

A core is a CPU. Ergo, when we read that the Intel Core i5-8400 processor has 6 cores, it means that it has 6 CPUs executing instructions in parallel on a single physical chip. If each CPU supports an addition instruction, then the processor could calculate 6 distinct sums simultaneously.
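A back-of-the-envelope way to picture this is to count how many rounds of work are needed when every core handles one sum per round. This is an idealised model that ignores scheduling and memory effects, and `rounds_needed` is a name invented for the example.

```python
import math


def rounds_needed(num_tasks: int, num_cores: int) -> int:
    """Rounds of work required if each core completes one task per round."""
    return math.ceil(num_tasks / num_cores)


print(rounds_needed(num_tasks=6, num_cores=1))   # 6
print(rounds_needed(num_tasks=6, num_cores=6))   # 1
print(rounds_needed(num_tasks=13, num_cores=6))  # 3
```

Six independent sums take six rounds on a single core but only one round on six cores. The last line shows that the benefit is not always a clean factor of six: a thirteenth task forces an extra round.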

Max Turbo Frequency

The two Goliaths of the semiconductor industry, Intel and AMD, set out to create the world's fastest processor. By using superscalar architectures and pipelining, they managed to produce processors that operate at frequencies on the order of several gigahertz. However, as with most things, you can't measure success based on a single metric. Speed, although important, should not be pursued at the cost of everything else. With the advent of laptops and mobile devices, power consumption began to take priority over speed.

The power bill aside, the more power a processor consumes, the more heat it generates, and when a processor runs hot all the time, its lifespan is reduced. It's for this reason that manufacturers created a distinction between nominal and turbo frequencies. Most of the time the processor will operate at the nominal frequency. However, for computationally expensive tasks such as gaming and video processing, it's possible to redirect the power from multiple cores to a single core in order to boost its clock frequency to a predefined limit (the max turbo frequency). Referring back to the Intel Core i5-8400 processor, the nominal frequency that all cores can reach at the same time is 2.8 GHz, and the max turbo frequency that a single core can reach, as long as power and thermal limits allow, is 4.0 GHz.

Cache

The other components that make up a computer do not operate at the same clock frequency as the processor. Modern motherboards, for example, tend to have a clock frequency on the order of 500 MHz. Due to the discrepancy between clock frequencies, any time the processor has to go out to main memory to retrieve data, it ends up sitting idle for most of that time. It's for this reason that modern processors make use of a cache on the physical chip. A cache takes advantage of spatial and temporal locality to reduce the amount of time the CPU(s) spend waiting on other devices.

Spatial Locality

Spatial locality refers to the fact that a range of addresses in close proximity to one another have a tendency to be used together. To elaborate, imagine a loop stepping through an array: both the instructions in the loop body and the array elements sit at neighbouring addresses.

Temporal Locality

Temporal locality refers to the fact that an address that was accessed recently is likely to be accessed again soon. For example, imagine two variables that, although stored at addresses far away from one another, are repeatedly accessed one after the other by some program.

By keeping the content of these addresses on the processor, the CPU(s) can continuously execute instructions without having to go out to lower levels of memory to retrieve more, provided they're stored in the cache.
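Both kinds of locality can be seen in a toy cache simulation. The sketch below assumes a tiny cache of 4 lines, each covering 8 neighbouring addresses, with least-recently-used eviction. Real caches are organised differently and are far larger, so treat the parameters and the `hit_rate` helper as illustrative only.

```python
from collections import OrderedDict


def hit_rate(addresses, num_lines=4, line_size=8):
    """Fraction of accesses served by a tiny fully associative LRU cache."""
    cache = OrderedDict()  # keys are line numbers, least recently used first
    hits = 0
    for address in addresses:
        line = address // line_size  # neighbouring addresses share a line
        if line in cache:
            hits += 1
            cache.move_to_end(line)  # mark as most recently used
        else:
            if len(cache) >= num_lines:
                cache.popitem(last=False)  # evict the least recently used line
            cache[line] = True
    return hits / len(addresses)


sequential = list(range(64))               # spatial locality: consecutive addresses
two_variables = [0, 1000] * 32             # temporal locality: same two addresses reused
scattered = [i * 1000 for i in range(64)]  # neither: every access touches a new line

print(hit_rate(sequential))     # 0.875
print(hit_rate(two_variables))  # 0.96875
print(hit_rate(scattered))      # 0.0
```

The sequential walk only misses on the first address of each line, and the two far-apart variables only miss on their first access. The scattered pattern never hits at all.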

Size

We call the percentage of accesses for which the CPU(s) have to retrieve data that isn't cached the miss rate. By increasing the cache size, we can store the content of more addresses in cache, thus reducing the miss rate. After this explanation, one might be led to believe that more cache is always a good thing. However, increasing the cache size also increases the seek time. The seek time is the amount of time the CPU spends searching for the data in cache. Given that you'd likely find the data you're looking for 95% of the time, the seek time is paid on nearly every access, so even a small increase can have drastic effects on the overall performance of the processor.
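The trade-off can be put into numbers with the usual average access time estimate: seek time plus miss rate times the cost of a miss. All of the figures below are invented for illustration and do not describe any particular processor.

```python
def average_access_ns(seek_time_ns: float, miss_rate: float, miss_penalty_ns: float) -> float:
    """Average time per memory access: every access pays the seek time, misses pay extra."""
    return seek_time_ns + miss_rate * miss_penalty_ns


MISS_PENALTY_NS = 20.0  # assumed cost of going out to main memory

small_cache = average_access_ns(seek_time_ns=1.0, miss_rate=0.05, miss_penalty_ns=MISS_PENALTY_NS)
large_cache = average_access_ns(seek_time_ns=1.5, miss_rate=0.04, miss_penalty_ns=MISS_PENALTY_NS)

print(f"small cache: {small_cache:.1f} ns")  # small cache: 2.0 ns
print(f"large cache: {large_cache:.1f} ns")  # large cache: 2.3 ns
```

Under these assumed numbers, the larger cache misses less often yet is slower on average, because the extra half nanosecond of seek time is paid on every access.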

When shopping around, it's safe to assume that the engineers who designed the chip determined the optimal balance between reducing the miss rate and increasing the seek time relative to the cache size.

Final thoughts

A higher clock frequency and/or an increase in the number of cores will enable your computer to process more information in a given period of time. That being said, there'd be no real point in dropping $400 on a very fast multi-core processor if it's constantly having to wait for your hard drive. As with most things, you get what you pay for: a higher-end processor will end up costing more. Readers of this post are encouraged to select a processor based on their needs and what their budget allows.