Explaining How To Obtain Optimal Hyperparameter Values

This article aims to explain what grid search is and how we can use it to obtain optimal values of model hyperparameters.

I will explain all of the required concepts in simple terms and outline how we can implement grid search in Python.

Photo by Evgeni Tcherkasski on Unsplash

1. The Problem

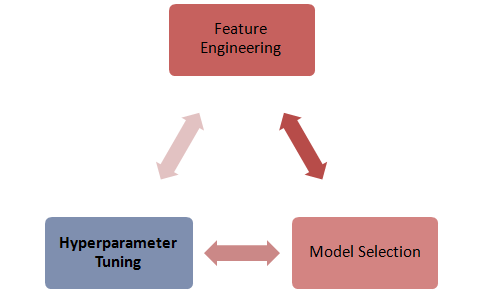

For the sake of simplicity, we can divide the analytical aspects of a data science project into three parts:

1. The first part would be all about gathering the required data and engineering the features.

2. The second part would revolve around choosing the right machine learning model.

3. The last part would be around finding the optimal hyperparameters.

Let's understand the third part better: not only is tuning hyperparameters considered a black art, it is also a tedious task that takes time and effort.

This is where grid search can be extremely helpful, because it lets us determine the optimal values in a systematic manner.

2. What Is A Hyperparameter?

A machine learning model has multiple parameters that are not learned from the training set. These are known as hyperparameters, and they can have a significant effect on the accuracy of the model. Therefore, hyperparameters are particularly important in a data science project.

The hyperparameters are configured up-front and are provided by the caller of the model before the model is trained.

For instance, the learning rate of a neural network is a hyperparameter because it is set by the caller before the training data is fed to the model. On the other hand, the weights of a neural network are not hyperparameters because they are learned from the training data.
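In scikit-learn this distinction is visible directly in the API. The sketch below uses `MLPClassifier`, scikit-learn's multi-layer perceptron, as the neural network (our choice for illustration, not something the article prescribes): `learning_rate_init` is passed to the constructor before training, while the weights only exist in the `coefs_` attribute after `fit()` has seen the data. The synthetic dataset and the specific values are arbitrary.

```python
from sklearn.datasets import make_classification
from sklearn.neural_network import MLPClassifier

# Small synthetic dataset, used here only for illustration
X, y = make_classification(n_samples=200, n_features=4, random_state=0)

# Hyperparameters: chosen by us before training starts
model = MLPClassifier(hidden_layer_sizes=(8,), learning_rate_init=0.01,
                      max_iter=500, random_state=0)

# Parameters: the weights are learned from the training data during fit()
model.fit(X, y)

print(model.learning_rate_init)  # the value we set before training
print(model.coefs_[0].shape)     # learned input-to-hidden weight matrix (4 features x 8 units)
```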

Furthermore, consider the Support Vector Classification (SVC) model, which is used for classification tasks. It requires a number of hyperparameters.

The scikit-learn implementation of SVC can be set up with a large number of hyperparameters; some of the common ones are:

- C: This is the regularisation parameter. The strength of the regularisation is inversely proportional to C.

- kernel: We can set the kernel parameter to linear, poly, rbf, sigmoid, precomputed or provide our own callable.

- degree: This is the degree of the polynomial kernel, so it only matters when kernel is set to poly; other kernels ignore it.

- gamma: This is the kernel coefficient for the rbf, poly and sigmoid kernels.

- max_iter: This is the hard limit on the number of iterations for the solver; -1 means no limit.
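As a quick illustration, these hyperparameters are simply keyword arguments of the `SVC` constructor. The values below are scikit-learn's defaults; `get_params()` reads them back and `set_params()` changes them on an existing estimator, which is the mechanism `GridSearchCV` uses to apply each candidate combination.

```python
from sklearn.svm import SVC

# Hyperparameters are passed to the constructor before any training happens
model = SVC(C=1.0, kernel="rbf", degree=3, gamma="scale", max_iter=-1)

# get_params() returns the hyperparameters the estimator was configured with
params = model.get_params()
for name in ["C", "kernel", "degree", "gamma", "max_iter"]:
    print(name, "=", params[name])

# set_params() changes hyperparameters in place and returns the estimator
model.set_params(C=10, kernel="poly")
print(model.get_params()["C"], model.get_params()["kernel"])
```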

Consider that we want to use the SVC model (for whatever reason). Setting the optimal values of the hyperparameters can be challenging and resource-demanding. Imagine how many combinations we would need to try to determine the best parameter values: the number multiplies with every hyperparameter we add.
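To put a number on it, the sketch below counts the combinations for an illustrative grid of 4 kernels, 5 values of C and 2 values of max_iter. The total is the product of the number of candidate values per hyperparameter; scikit-learn's `ParameterGrid` enumerates the same combinations explicitly. The 5-fold cross-validation factor at the end is an assumption that matches `GridSearchCV`'s default.

```python
from math import prod

from sklearn.model_selection import ParameterGrid

# An illustrative grid: 4 kernels x 5 values of C x 2 values of max_iter
grid = {
    "kernel": ["linear", "poly", "rbf", "sigmoid"],
    "C": [1, 2, 3, 300, 500],
    "max_iter": [1000, 100000],
}

# Number of combinations = product of the number of values per hyperparameter
n_combinations = prod(len(values) for values in grid.values())
print(n_combinations)  # 40

# ParameterGrid enumerates every combination explicitly
print(len(ParameterGrid(grid)))  # 40

# With 5-fold cross-validation, each combination is trained 5 times
print(n_combinations * 5)  # 200
```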

This is where Grid Search comes in.

3. What Is Grid Search?

Grid search is a tuning technique that attempts to find the optimal values of hyperparameters. It is an exhaustive search over a specified set of parameter values for a model: every combination of the candidate values is trained and scored, and the best-scoring combination is selected. The model is also known as an estimator.
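To make the idea concrete, here is a minimal hand-written grid search. It is a sketch, not how scikit-learn implements it internally: it uses the bundled Iris dataset and a small grid chosen purely for illustration, scores every combination with 5-fold cross-validation via `cross_val_score`, and keeps the best one. `GridSearchCV` automates this kind of loop.

```python
from itertools import product

from sklearn.datasets import load_iris
from sklearn.model_selection import cross_val_score
from sklearn.svm import SVC

X, y = load_iris(return_X_y=True)

# Candidate values for each hyperparameter (illustrative choices)
grid = {"kernel": ["linear", "rbf"], "C": [0.1, 1, 10]}

best_score, best_params = -1.0, None
names = list(grid)
# Exhaustive search: try every combination of the candidate values
for values in product(*(grid[name] for name in names)):
    params = dict(zip(names, values))
    # Score each combination by its mean 5-fold cross-validated accuracy
    score = cross_val_score(SVC(**params), X, y, cv=5, scoring="accuracy").mean()
    if score > best_score:
        best_score, best_params = score, params

print(best_params, round(best_score, 3))
```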

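Running this search automatically saves us the time and effort of trying combinations by hand, although the computational cost grows with the number of combinations in the grid.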

4. Python Implementation

We can use the grid search in Python by performing the following steps:

1. Install the scikit-learn library (the package is named scikit-learn on PyPI; sklearn is only its import name)

pip install scikit-learn

2. Import GridSearchCV from scikit-learn

from sklearn.model_selection import GridSearchCV

3. Import your model

from sklearn.svm import SVC

4. Create a list of hyperparameter dictionaries

This is the key step.

Let's consider that we want to find the optimal hyperparameter values for:

- kernel: we want the model to try the linear, poly, rbf and sigmoid kernels and give us the best one. We leave out precomputed, because that option expects the input to be a precomputed kernel matrix rather than a feature matrix, so those fits would fail on ordinary training data.

- C: we want the model to try the following values of C: [1, 2, 3, 300, 500]

- max_iter: we want the model to use the following values of max_iter: [1000, 100000] and give us the best value.

We can create the required list of dictionaries:

parameters = [{'kernel': ['linear', 'poly', 'rbf', 'sigmoid'], 'C': [1, 2, 3, 300, 500], 'max_iter': [1000, 100000]}]
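GridSearchCV takes a list of dictionaries rather than a single one because each dictionary is searched as a separate sub-grid. The sketch below (a hypothetical variation on the grid above) uses that to search degree only together with the poly kernel, since the other kernels ignore it, and counts the resulting candidates with `ParameterGrid`.

```python
from sklearn.model_selection import ParameterGrid

# Each dictionary is expanded into its own sub-grid; the sub-grids are then combined.
# degree only affects the poly kernel, so it appears only in the second dictionary.
parameters = [
    {"kernel": ["linear", "rbf", "sigmoid"], "C": [1, 2, 3, 300, 500]},
    {"kernel": ["poly"], "degree": [2, 3, 4], "C": [1, 2, 3, 300, 500]},
]

# 3 * 5 = 15 combinations from the first dict, plus 1 * 3 * 5 = 15 from the second
print(len(ParameterGrid(parameters)))  # 30
```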

5. Instantiate GridSearchCV, pass in the parameters and fit it to the training data

clf = GridSearchCV(SVC(), parameters, scoring='accuracy')
clf.fit(X_train, y_train)

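Note: we decided to use accuracy as the scoring measure to assess the performance. By default, GridSearchCV scores each combination using 5-fold cross-validation on the training data.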
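The steps above assume X_train and y_train already exist. Below is a self-contained sketch that fills that gap, using scikit-learn's bundled Iris dataset as a stand-in for your own data (our assumption; no dataset is specified here) and the hyperparameter grid from step 4 minus the precomputed kernel, which needs a kernel matrix instead of raw features. Convergence warnings from the runs capped at max_iter=1000 are silenced only to keep the output readable.

```python
import warnings

from sklearn.datasets import load_iris
from sklearn.exceptions import ConvergenceWarning
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.svm import SVC

# Some fits stop at max_iter=1000 and warn; hide those warnings for readability
warnings.filterwarnings("ignore", category=ConvergenceWarning)

# Iris stands in for your own data here
X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

parameters = [{
    "kernel": ["linear", "poly", "rbf", "sigmoid"],
    "C": [1, 2, 3, 300, 500],
    "max_iter": [1000, 100000],
}]

# Every combination is scored by 5-fold cross-validation on the training set
clf = GridSearchCV(SVC(), parameters, scoring="accuracy", cv=5)
clf.fit(X_train, y_train)

print(clf.best_params_)
```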

6. Finally, print out the best parameters:

print(clf.best_params_)

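That's all.

We will now be presented with the best combination of hyperparameter values found in the grid. Grid search only compares the candidate values we supplied, so these values are optimal among those candidates rather than globally optimal.

The selected parameters are the ones that maximised the mean cross-validated score for the chosen scoring measure (accuracy, in our case).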
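Beyond best_params_, the fitted GridSearchCV object exposes a few other attributes worth checking. The sketch below again uses Iris and a smaller, illustrative grid so it runs quickly on its own: best_score_ is the mean cross-validated score of the winner, best_estimator_ is that model refitted on the whole training set (with the default refit=True), and cv_results_ records the score of every combination that was tried.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.svm import SVC

X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

grid = {"kernel": ["linear", "rbf"], "C": [1, 10, 100]}  # illustrative grid
clf = GridSearchCV(SVC(), grid, scoring="accuracy")
clf.fit(X_train, y_train)

# Mean cross-validated accuracy of the winning combination
print(clf.best_score_)

# With refit=True (the default), best_estimator_ is retrained on all of X_train
# using best_params_, so it can be evaluated on the held-out test set
print(clf.best_estimator_.score(X_test, y_test))

# cv_results_ records the mean score of every combination that was tried
for params, score in zip(clf.cv_results_["params"], clf.cv_results_["mean_test_score"]):
    print(params, round(score, 3))
```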

5. Summary

This article explained how to use grid search to obtain optimal hyperparameters for a machine learning model.