In this post I share my experience overclocking the popular Intel i7-8700K, a member of Intel's so-called 8th generation, aka Coffee Lake. Besides being Intel's first mainstream 6-core desktop CPU, it has some process improvements that make it well suited for overclocking. Intel's Z370 chipset comes to assist.

Update 1: At 5.1 GHz, I once managed to freeze the system under a heavy DL load, so I reduced the frequency to 5.0 GHz.

Update 2: Since this post attracts a steady influx of visitors, I decided to list all my BIOS settings for your reference; see the end of the post.

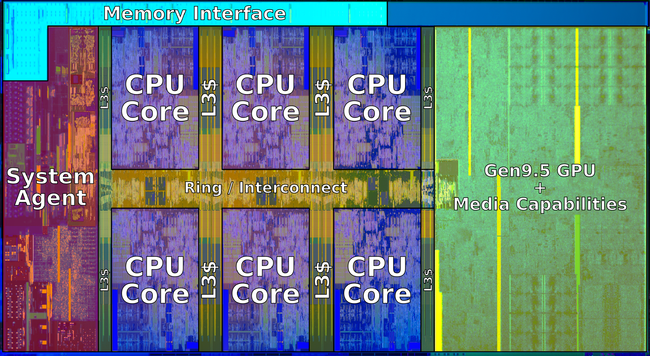

i7-8700K die (WikiChip.org)


A little bit of history

My first overclocked system was a Pentium 120. The CPU frequency multiplier had to be set manually with a combination of jumpers on the mainboard. I bought a Gigabyte motherboard and then asked my more experienced colleagues for their opinion of the brand. "Just a usual Taiwanese no-name, man! You gambled; hope you're a lucky one!" Back in 1996, nobody knew what the brand would become. I set the jumpers to 120 and started it even without a CPU fan, just for testing. It worked, but I screamed when I touched the CPU: it was so hot. I then set the jumper caps to overclock it to 150, and that worked too. Multiplier locks had not been invented yet.

My second overclocked CPU was a Celeron 300A in the Slot 1 form factor, from the early days of the Celeron line. Intel differentiated the Celeron from the Pentium line by a lower FSB: 66 MHz vs. 100 MHz for the Pentium II. One BIOS setting could change the FSB to 100 MHz, and the 300A would instantly become a Pentium II 450. In fact, a slightly superior one: its L2 cache was smaller, but it ran at full core speed. The board was the iconic Abit BH6, which served me for almost 10 years thanks to the unique upgradeability of the Intel platform back then. With just a BIOS update and a Slot 1 adapter, it supported a Celeron 1400 as its maximum, almost 5 times the CPU it originally supported! The RAM maxed out at 768 MB.

Nowadays, I'd say overclocking is a mixture of art and science: there are so many knobs to turn that progress is only possible through a lengthy trial-and-error process.

Also, you may or may not be able to repeat someone else's success, depending on your particular chip: some chips are less overclockable than others. This is called the "silicon lottery".

My setup

I was choosing between the Ryzen 2700X and the 8700K, but eventually the deciding factor was the built-in graphics of Intel's chip. Since I only wanted to use a graphics card for Machine Learning, I assumed I should not share it with display output. With a Ryzen, I'd probably have had to buy an extra card. OK, AMD will surely get my money when I shop for a headless devbox.

I then picked a rather inexpensive 64 GB 3200 MHz kit from G.Skill and got an Asus Prime Z370-A motherboard whose memory QVL lists this kit. The board has a rare set of three x16 PCIe slots; most boards I checked out had only two x16 slots, with the rest being x1 slots. I wanted the option to put in 2 graphics cards plus the Intel PCIe storage card I bought a while ago. Such a configuration would run with 8+4+4 CPU PCIe lanes, respectively. If I trade my Intel PCIe storage card for an NVMe SSD that can use the chipset's lanes, my remaining two cards could use 8+8 CPU lanes. A single graphics card could use the full 16 CPU lanes.

I added a Super Flower Leadex II 750 W power supply, an Alphacool Eisbaer LT240 all-in-one water cooler, and a spacious Fractal Design S case.

Intel i7-8700K CPU

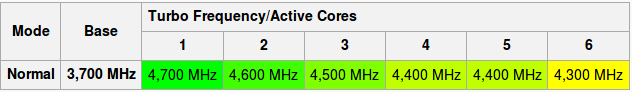

Intel's turbo mode is remarkable.

source: wikichip.org


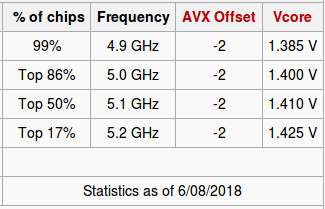

One can see that with all 6 cores loaded, only a boost to 4,300 MHz is guaranteed. We, however, will aim at a 5,000 MHz max-turbo frequency on all cores, even though, according to statistics, not all chips are capable of that speed.

source: wikichip.org


I delidded the CPU right after making sure it worked. This is not such a big deal: anyone who can put a PC together from parts should be able to perform this delicate operation, given the right tools at hand. I applied Thermal Grizzly Conductonaut between the CPU die and the inner surface of the lid.

Using the right tools

I settled on Intel's LINPACK as my torture tool, with occasional use of prime95. These are very well-written pieces of software, and they put a heavy load on the system through the AVX/AVX2 instruction sets. Both tools are good at pushing the system to its limits and exposing instabilities. If your system does not hang, reboot, or produce math errors during these tests, you can be fairly confident that your everyday apps will run trouble-free.
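Tools like LINPACK and prime95 flag instability by checking their computed results. A minimal sketch of that principle, assuming only the Python standard library: recompute the same deterministic floating-point workload several times and count runs whose result differs bit-for-bit from the first one. The function names are hypothetical, and pure Python does not use AVX, so this only illustrates the error check; it is not a substitute for a real torture test.

```python
import hashlib
import random
import struct


def workload(seed: int, n: int = 64, iterations: int = 10) -> str:
    """Deterministic floating-point workload (repeated matrix-vector products).

    Returns a SHA-256 hex digest of the exact bit patterns of the result, so a
    single flipped bit anywhere in the computation changes the digest.
    """
    rng = random.Random(seed)
    matrix = [[rng.uniform(-1.0, 1.0) for _ in range(n)] for _ in range(n)]
    vec = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    for _ in range(iterations):
        vec = [sum(a * b for a, b in zip(row, vec)) for row in matrix]
        scale = max(abs(x) for x in vec) or 1.0
        vec = [x / scale for x in vec]  # keep values bounded between iterations
    digest = hashlib.sha256()
    for x in vec:
        digest.update(struct.pack("<d", x))
    return digest.hexdigest()


def count_mismatches(rounds: int = 20, seed: int = 42) -> int:
    """Rerun the workload; a stable CPU must reproduce the reference digest every time."""
    reference = workload(seed)
    return sum(1 for _ in range(rounds) if workload(seed) != reference)


if __name__ == "__main__":
    errors = count_mismatches()
    print("PASSED" if errors == 0 else f"FAILED: {errors} mismatching rounds")
```

A mismatch on a machine that passes at stock settings points to an unstable overclock, which is the same reasoning behind a failed LINPACK residual check.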

I also put together a 30-minute test of some numpy and sklearn functions resembling a typical Machine Learning workflow.

On the monitoring side, I used PCM (Intel Performance Counter Monitor), turbostat, and i7z. Kudos to the latter for showing the core voltage.
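Alongside those tools, a quick way to eyeball per-core clocks on Linux is the `cpu MHz` field in `/proc/cpuinfo`. A minimal sketch, assuming the usual `cpu MHz : <value>` line format; the function names are hypothetical, and unlike i7z this does not report voltage.

```python
import re
from typing import List

# Matches lines such as "cpu MHz\t\t: 4999.987" in /proc/cpuinfo.
_MHZ_LINE = re.compile(r"^cpu MHz\s*:\s*([0-9.]+)", re.MULTILINE)


def parse_core_mhz(cpuinfo_text: str) -> List[float]:
    """Extract the 'cpu MHz' value of every logical CPU listed in cpuinfo text."""
    return [float(value) for value in _MHZ_LINE.findall(cpuinfo_text)]


def read_core_mhz(path: str = "/proc/cpuinfo") -> List[float]:
    """Read and parse /proc/cpuinfo (Linux only)."""
    with open(path) as f:
        return parse_core_mhz(f.read())
```

Calling `read_core_mhz()` in a loop while a stress test runs gives a crude view of whether all cores actually hold the target frequency or drop under thermal throttling.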

A full review of the Linux overclocker's toolbox is in another post: Over-clocking under Linux

I also used a wall-socket power meter as an objective measure of the power consumed by the whole system.

Synthetic vs. real-life workflows

LINPACK and prime95 are very resource-demanding, but do you really want to solve systems of tens of thousands of linear equations on your desktop all day long? The answer, of course, depends on what you do on your desktop besides typical web browsing. Video editing? Then perhaps you need to test with video encoding software. Gaming? Then use gaming benchmarks.

I'd like to do Data Science. I found typical Machine Learning code from Python's sklearn not too taxing. Even XGBoost and LightGBM, which use OpenMP to run on all available cores, use AVX-family instructions sparingly, which keeps temperatures rather cool.

So, to measure the impact of changing settings in my real-life scenarios, I put together a benchmark that applies some common algorithms to synthetic (generated on the fly) but realistic-looking data.
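As a rough, standard-library-only illustration of that idea (not the benchmark's actual code), the sketch below generates synthetic classification data on the fly, trains a plain gradient-descent logistic regression as a stand-in for the sklearn models, and reports the best wall-clock time over a few repeats. All names and parameters here are hypothetical.

```python
import math
import random
import time


def make_data(n_samples, n_features, seed=0):
    """Generate a noisy, roughly linearly separable binary classification set."""
    rng = random.Random(seed)
    w_true = [rng.gauss(0.0, 1.0) for _ in range(n_features)]
    X, y = [], []
    for _ in range(n_samples):
        x = [rng.gauss(0.0, 1.0) for _ in range(n_features)]
        score = sum(w * xi for w, xi in zip(w_true, x)) + rng.gauss(0.0, 0.5)
        X.append(x)
        y.append(1 if score > 0 else 0)
    return X, y


def train_logreg(X, y, epochs=20, lr=0.5):
    """Batch gradient descent on the logistic loss (no intercept, no regularization)."""
    n, d = len(X), len(X[0])
    w = [0.0] * d
    for _ in range(epochs):
        grad = [0.0] * d
        for x, target in zip(X, y):
            z = sum(wj * xj for wj, xj in zip(w, x))
            z = max(-30.0, min(30.0, z))  # avoid overflow in math.exp
            p = 1.0 / (1.0 + math.exp(-z))
            for j in range(d):
                grad[j] += (p - target) * x[j]
        w = [wj - lr * g / n for wj, g in zip(w, grad)]
    return w


def accuracy(w, X, y):
    """Fraction of samples whose predicted class matches the label."""
    hits = sum((sum(wj * xj for wj, xj in zip(w, x)) > 0) == bool(t) for x, t in zip(X, y))
    return hits / len(y)


def best_time(fn, *args, repeats=3):
    """Best-of-N wall-clock time in seconds, which damps one-off OS noise."""
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        fn(*args)
        best = min(best, time.perf_counter() - start)
    return best
```

Running something like `best_time(train_logreg, *make_data(5000, 20))` once per BIOS configuration and comparing the timings is the kind of before/after measurement the benchmark is meant for; taking the best of several runs rather than the mean reduces the influence of background activity.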

More on this benchmark and its results in another post of mine.

Struggling for stability

It turned out that getting my system to run at 5 GHz was not that difficult, but making it run stably under extreme load was.

Intel's stated TDP certainly does not account for the maximum load a processor can take, not even at the base advertised frequency. Want proof? Run LINPACK and watch your power draw exceed the stated TDP, and possibly watch your processor throttle below its base speed if your cooling is inadequate. For this reason, motherboard vendors all provide an "AVX offset" in their BIOSes: while AVX code is running, it drops the CPU frequency multiplier by this offset, so the CPU runs cooler and can sustain the load.
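The arithmetic behind the AVX offset is simple: the core clock is the base clock (BCLK) times the multiplier, and the offset is subtracted from the multiplier while AVX code runs. A minimal sketch with a hypothetical helper name, using the BCLK of 100.0 MHz and the all-core ratio of 50 from the settings listed at the end of this post:

```python
def effective_core_mhz(bclk_mhz: float, core_ratio: int, avx_offset: int = 0,
                       running_avx: bool = False) -> float:
    """Core clock = BCLK x multiplier; the AVX offset lowers the multiplier
    only while AVX instructions are executing."""
    if avx_offset < 0 or avx_offset >= core_ratio:
        raise ValueError("AVX offset must be between 0 and core_ratio - 1")
    ratio = core_ratio - avx_offset if running_avx else core_ratio
    return bclk_mhz * ratio


print(effective_core_mhz(100.0, 50))                                   # non-AVX code
print(effective_core_mhz(100.0, 50, avx_offset=2, running_avx=True))   # AVX code
```

With an offset of 2, the same 5.0 GHz setting becomes 4.8 GHz during AVX-heavy work such as LINPACK.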

Part of the problem was that motherboard vendors want to make overclockers' lives easier, so they set many options to Auto, with some default values and behavior. Not only may these values be undocumented and suboptimal, but the logic behind some Auto algorithms is opaque or too power-hungry. For this reason, experienced overclockers advise setting the most critical options, such as Vcore, manually.

I learned this the hard way when I discovered that my system was heavily throttling the CPU with the default (out-of-the-box!) BIOS settings. Carlyle from the Asus ROG Forum suggested that 64 GB of RAM is too taxing for the system: Asus's Auto algorithms feed the memory controller more voltage, which leads to overheating and thermal throttling. He suggested removing some RAM to check whether the throttling persisted. With 32 GB I still observed throttling, while with 16 GB everything was just fine! My takeaway was that since most gamers do not need more than 16 GB of RAM, vendors test with typical 2x8 GB kits, and I am on my own with my 4x16 GB. On second thought, Intel's memory controller deserves some blame too: it is indeed true that only small amounts of RAM can be overclocked to extreme (4+ GHz) speeds on current Intel platforms. Also, remember Mac users' uproar about the MacBook's 16 GB RAM limit a while ago? It is probably the same story: large amounts of RAM eat disproportionately more power.

On the way to 5+ GHz

Asus EZtune, the 5G profile from the BIOS, and the recipes from der8auer and The Sentinel all failed on me: the system was either unstable or throttling under LINPACK load. The settings were just too aggressive, probably for the same RAM reasons, so I had to fully understand what was going on.

It's important to understand that setting the voltages (Vcore, VCCIO, System Agent) is not enough: they are not constant and drop under load. This drop can be counteracted by LLC (Load Line Calibration), which compensates more or less aggressively depending on its level (1 to 7). Undervoltage leads to system instability; overvoltage leads to overheating, throttling, and instability as well.
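The drop under load follows the usual simplified load-line model: the voltage reaching the core is the set voltage minus the load current times the load-line resistance, V_load = V_set - I x R_LL, and a more aggressive LLC level effectively lowers R_LL. A minimal sketch with a hypothetical helper name; the numbers (1.40 V, 150 A, 0.8 and 0.4 mOhm) are illustrative assumptions, not measurements from this system, and real VRMs add transient effects this model ignores.

```python
def vcore_under_load(v_set: float, current_a: float, r_ll_mohm: float) -> float:
    """Simplified load-line model: V_load = V_set - I * R_LL (R_LL in milliohms)."""
    return v_set - current_a * r_ll_mohm / 1000.0


# Illustrative numbers only: same set voltage and load current, two load-line strengths.
for r_ll in (0.8, 0.4):
    print(f"R_LL = {r_ll} mOhm -> {vcore_under_load(1.40, 150.0, r_ll):.3f} V")
```

Halving R_LL halves the droop, which is why a higher LLC level can stabilize a load that a weaker one cannot, at the cost of more voltage, heat, and current.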

Thus, you are jointly tuning the target max-turbo frequency, Vcore, VCCIO, VCSA (System Agent voltage), and the LLC level.

One would typically set them in manual Vcore mode, trying to minimize the LLC level and Vcore so that the system is stable at minimal power consumption.
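That trial-and-error loop can be sketched as a step-down search. Here `is_stable` is a hypothetical stand-in for "change the BIOS value, boot, run LINPACK", voltages are in integer millivolts to avoid floating-point drift, and because a single pass is no proof of stability, in practice one would add a small safety margin back on top of the result.

```python
from typing import Callable


def find_min_stable_mv(start_mv: int, floor_mv: int, step_mv: int,
                       is_stable: Callable[[int], bool]) -> int:
    """Step Vcore down from a known-stable value and return the lowest voltage
    (in millivolts) that still passed, never going below floor_mv."""
    if step_mv <= 0:
        raise ValueError("step_mv must be positive")
    if not is_stable(start_mv):
        raise ValueError("start from a voltage that is known to be stable")
    lowest_ok = start_mv
    candidate = start_mv - step_mv
    while candidate >= floor_mv and is_stable(candidate):
        lowest_ok = candidate
        candidate -= step_mv
    return lowest_ok
```

For example, with a simulated chip that is stable at 1320 mV and above, `find_min_stable_mv(1400, 1250, 5, lambda mv: mv >= 1320)` stops at 1320. The same loop applies to the LLC level, stepping down one level at a time.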

Some folks also overclock the RAM, but I settled on my stock XMP profile. Aiming higher than 3200 MHz would be unlikely to gain much performance with a 64 GB kit.
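For a rough sense of scale: the i7-8700K has a dual-channel memory controller, so four DIMMs still share two 64-bit channels, and theoretical peak bandwidth scales linearly with the transfer rate. A minimal sketch; the helper name and the 3600 MT/s comparison point are hypothetical.

```python
def peak_bandwidth_gbs(transfer_rate_mts: int, channels: int = 2,
                       bus_width_bytes: int = 8) -> float:
    """Theoretical peak DRAM bandwidth in GB/s: transfers/s x bytes per transfer x channels."""
    return transfer_rate_mts * bus_width_bytes * channels / 1000.0


base = peak_bandwidth_gbs(3200)    # DDR4-3200 XMP, dual channel
faster = peak_bandwidth_gbs(3600)  # hypothetical overclock
print(f"{base:.1f} GB/s -> {faster:.1f} GB/s (+{(faster / base - 1) * 100:.1f}%)")
```

Even a successful jump from 3200 to 3600 MT/s raises the theoretical peak by only 12.5%, and real workloads usually gain less than the peak figure suggests.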

Finally, there is an option to overclock the cache (or uncore) speed, but I just left those settings at Auto. I have to admit I didn't want to spend too much time on overclocking: while it's fun, there is always other work to do.

I settled on Adaptive Vcore mode, which lets SVID adjust the voltage, with an optional offset applied on top of the resulting Vcore. I also chose moderate LLC levels to help hold up VCCIO and VCSA under load, since an excessive voltage and the resulting higher current would definitely lead to overheating.

It's important to note that higher voltages and frequencies do not noticeably increase power consumption in the absence of load! My system's idle power consumption is about 30 W (at the wall socket); it consumes 75 W under single-core load (the other cores are in C1 (HALT) or deeper states), and can reach 110 W with Logistic Regression, 160 W with LightGBM and XGBoost, and 260 W or more under LINPACK (unstable at 5 GHz, though).

AVX dilemma

I could run the usual ML workflows and even prime95 at 5 GHz with no AVX offset, but not LINPACK. LINPACK was mostly stable at 4.8 GHz (AVX offset 2), though even then it would sometimes produce a few errors, failing the test. XGBoost and LightGBM could happily run at 5.1 GHz without getting too hot.

If I leave the AVX offset at 2, I surely lose some numeric performance, since AVX is indeed used here and there across numpy. My CPU would have to switch back and forth between frequency states, which is not ideal: each switch also briefly puts the core into a HALT state.

I decided to leave the settings at 5.0 GHz with no AVX offset, figuring that I'll know what to do if I ever meet a real-life app that pushes my CPU into an unstable zone.

Resulting settings

AI Tweaker menu:

XMP Profile: DDR4-3200

BCLK Frequency: 100.0 (unchanged)

ASUS MultiCore Enhancement: Disabled

SVID Behaviour: Best-Case Scenario

AVX Offset: 0

CPU Core Ratio: Sync All Cores

1-Core Ratio Limit: 50

BCLK Frequency : DRAM Frequency Ratio: Auto

DRAM Odd Ratio Mode: Enabled

DRAM Frequency: DDR4-3200 MHz

TPU: Keep Current Setting

Power Saving & Performance Mode: Performance Mode

CPU SVID Support: Auto

Ai Tweaker > DIGI+ VRM submenu:

CPU LoadLine: Level 5

CPU Current Cap: 140%

(the rest at their default: Auto)

Ai Tweaker > Internal CPU Power Management submenu:

Intel SpeedStep: Enabled

Turbo Mode: Enabled

Long Duration Package Power Limit: 130

Package Power Time Window: 1

Short Duration Package Power Limit: Auto

IA AC LoadLine: 0.01

IA DC LoadLine: 0.01

CPU Core/Cache Current Limit Max: 255.50

CPU Core/Cache Voltage: Adaptive Mode

- Offset Mode Sign: "-"

- Additional Turbo Mode CPU Core Voltage: 1.370

- Offset Voltage: Auto

DRAM Voltage: 1.3530

CPU VCCIO Voltage: 1.15000

CPU System Agent Voltage: 1.17500

Advanced Menu:

HyperThreading: Disabled

Tcc Offset Time Window: 5 sec.

This combination produces a CPU core voltage of 1.4 V under load.

Links

WikiChip article

der8auer overclocking the 8700K with an ASUS motherboard

The Sentinel overclocking the 8700K with an ASUS motherboard

Kaby Lake overclocking guide from an Asus guru

TweakTown overclocking guide

BIOS settings guide (in Russian)